Depth Scan of Featureless Walls with a Robotic Manipulator System

Supervised by Dr. Zuria Bauer, Dr. Hermann Blum, Dr. Selen Ercan, and Pierre Chassagne, under Prof. Dr. Marc Pollefeys at the Computer Vision and Geometry Group.

Why Is Scanning a Flat Wall So Hard?

3D scanning sounds like a solved problem — until you try it on a plain white wall. Most scanning and registration methods rely on geometric or textural features to align overlapping scans. A brick corner, a door frame, a pipe — these give algorithms something to latch onto. But construction walls — especially freshly plastered or prefab panels — are essentially featureless planes. There’s nothing distinctive for ICP or any other registration algorithm to match against.

This project was motivated by a collaboration with LAYERED, a startup building autonomous robotic systems for wall-mounted plastic spraying. Their application needs precise 3D reconstructions of wall geometry to ensure uniform material deposition. The question was: can we get accurate, dense depth scans of featureless walls using a robot arm with a Time-of-Flight camera, and what are the limits?

The Setup

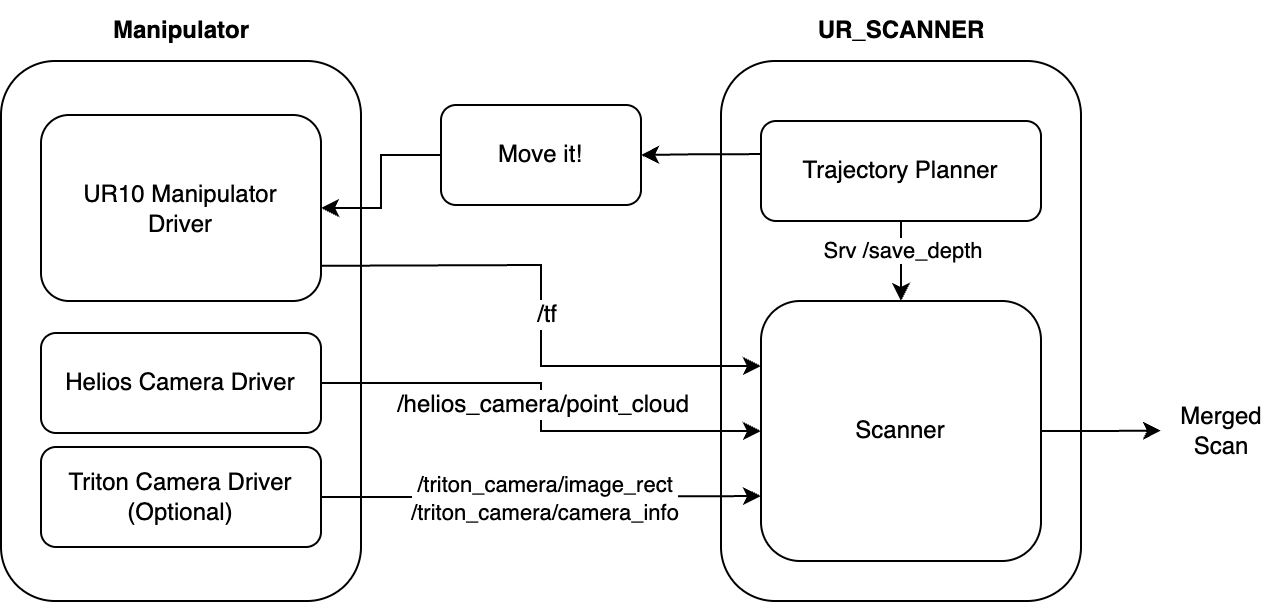

The system consists of a UR10 robotic manipulator with a Helios 2+ Time-of-Flight camera (and optionally a Triton RGB camera) mounted on its end-effector. Everything runs on ROS Noetic.

The scanning pipeline works as follows: the trajectory planner generates a zig-zag path based on the wall dimensions and the camera’s field of view, with a configurable overlap ratio between adjacent scans. The robot moves to each waypoint, pauses to let vibrations settle, then captures a depth frame. The camera pose at each position is computed via forward kinematics from the robot’s joint encoders, and each point cloud is transformed into a common base frame and merged.
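As a rough illustration of the planning step, here is a minimal sketch of a zig-zag waypoint generator. It assumes a wall-parallel scanning plane, a pinhole-style footprint of `2 * distance * tan(fov / 2)`, and hypothetical parameter names; it is not the planner used in the project.

```python
import math

def zigzag_waypoints(wall_width, wall_height, distance,
                     hfov_deg, vfov_deg, overlap=0.3):
    """Return (x, y) camera centers on the wall plane in zig-zag order.

    All lengths share one unit (e.g. meters). `overlap` is the fraction of a
    footprint shared by neighbouring captures (assumed definition).
    """
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")

    # Size of the wall patch seen by one frame at this standoff distance.
    foot_w = 2.0 * distance * math.tan(math.radians(hfov_deg) / 2.0)
    foot_h = 2.0 * distance * math.tan(math.radians(vfov_deg) / 2.0)

    def centers(length, foot):
        # A single capture is enough if the footprint spans the whole side.
        if length <= foot:
            return [length / 2.0]
        step = foot * (1.0 - overlap)
        n = math.ceil((length - foot) / step) + 1
        # Spread the n centers evenly so the outer footprints touch the edges;
        # the resulting overlap is at least the requested one.
        return [foot / 2.0 + i * (length - foot) / (n - 1) for i in range(n)]

    xs = centers(wall_width, foot_w)
    ys = centers(wall_height, foot_h)

    path = []
    for row, y in enumerate(ys):
        # Reverse every second row so the arm sweeps back instead of jumping.
        row_xs = xs if row % 2 == 0 else list(reversed(xs))
        path.extend((x, y) for x in row_xs)
    return path

# Illustrative values only, not the project's configuration.
waypoints = zigzag_waypoints(3.0, 2.0, distance=1.0,
                             hfov_deg=60.0, vfov_deg=60.0, overlap=0.25)
```

Each returned center would still need to be turned into a full end-effector pose and checked for reachability before being sent to the robot.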

We adopted a pause-and-capture strategy rather than continuous scanning, because frame accumulation during motion caused severe motion blur in the point clouds.

Optimizing Depth Quality

Before worrying about multi-scan merging, we first needed to understand the sensor itself. A significant part of the project was systematically characterizing the Helios ToF cameras to find the best operating parameters.

Camera selection. We compared the Helios 1 and Helios 2+ on the same flat wall target. The Helios 2+ showed better calibration, less edge distortion, and significantly better precision (~5 mm vs ~12 mm at 2σ), making it the clear choice.
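One plausible way to put a number on flat-wall precision is to fit a plane to the point cloud and report twice the standard deviation of the point-to-plane residuals. The sketch below assumes that definition; the function name is hypothetical and the exact metric used in the project may differ.

```python
import numpy as np

def plane_precision_2sigma(points):
    """Fit a least-squares plane to (N, 3) points; return 2 * std of residuals."""
    points = np.asarray(points, dtype=float)
    centroid = points.mean(axis=0)
    centered = points - centroid
    # The right singular vector with the smallest singular value is the
    # direction of least variance, i.e. the plane normal.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    normal = vt[-1]
    # Signed distance of every point to the fitted plane.
    residuals = centered @ normal
    return 2.0 * residuals.std()
```

The result is in the same unit as the input points, so a cloud in meters yields a value in meters.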

Image accumulation. Averaging multiple depth frames reduces noise substantially. Precision improved dramatically from 1 to 4 accumulated frames, with diminishing returns beyond that. We settled on ≥4 frames as a practical sweet spot.
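The diminishing returns are what you would expect if the per-frame noise is roughly independent: averaging N frames reduces the standard deviation by about 1/√N, so going from 1 to 4 frames halves the noise, while halving it again takes 16. A minimal software-side sketch of the averaging, assuming invalid pixels are encoded as 0 (an assumption about the data format, not something the camera documentation is quoted on here):

```python
import numpy as np

def accumulate_depth(frames, invalid=0.0):
    """Average a (K, H, W) stack of depth frames per pixel, skipping invalid samples."""
    frames = np.asarray(frames, dtype=float)
    valid = frames != invalid
    counts = valid.sum(axis=0)
    summed = np.where(valid, frames, 0.0).sum(axis=0)
    # Pixels without any valid sample keep the invalid marker.
    return np.where(counts > 0, summed / np.maximum(counts, 1), invalid)
```

This only makes sense while the camera is stationary, which is one reason the capture step waits for the arm to settle.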

Intensity-based filtering. The Helios cameras report a per-pixel intensity value that correlates with measurement confidence. By keeping only the brightest 50% of points, we achieved sub-millimeter precision, at the cost of losing the peripheral regions of each scan. This turned out to be one of the most effective quality improvements.
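The filter itself is small. A minimal sketch, assuming the points and their intensities arrive as aligned arrays (the function and parameter names are hypothetical):

```python
import numpy as np

def keep_brightest(points, intensity, keep_fraction=0.5):
    """Keep the `keep_fraction` of points with the highest intensity.

    points:    (N, 3) array of XYZ coordinates
    intensity: (N,) array of per-point intensity values
    Returns the filtered points and the boolean mask that selected them.
    """
    points = np.asarray(points)
    intensity = np.asarray(intensity)
    # Intensity value below which (1 - keep_fraction) of the points fall.
    threshold = np.quantile(intensity, 1.0 - keep_fraction)
    mask = intensity >= threshold
    return points[mask], mask
```

Because the threshold is a quantile of each frame rather than a fixed value, the same setting adapts to walls with different reflectivity, though ties at the threshold can keep slightly more than the requested fraction.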

Distance. Depth quality degrades with distance due to beam divergence, while a larger standoff covers more of the wall per frame. Staying within 2 m kept precision under 5 mm.

The Key Finding: Pose Accuracy > Registration

Here’s the most interesting result of the project. When we merged scans using only the robot’s forward kinematics — no registration at all — the results were remarkably good. Brick edges and mortar lines aligned precisely across adjacent scans. The robot’s joint encoders alone provided sufficient pose accuracy for high-quality merging.
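Merging without registration comes down to applying one rigid transform per scan. The sketch below assumes each camera pose is available as a 4x4 homogeneous matrix `T_base_cam`, for example the forward-kinematics flange pose composed with a fixed camera mounting transform; that composition and the names are assumptions for illustration.

```python
import numpy as np

def to_base_frame(points_cam, T_base_cam):
    """Map (N, 3) camera-frame points into the robot base frame."""
    points_cam = np.asarray(points_cam, dtype=float)
    R = T_base_cam[:3, :3]
    t = T_base_cam[:3, 3]
    # Row-vector form of p_base = R @ p_cam + t.
    return points_cam @ R.T + t

def merge_scans(scans, poses):
    """Transform every scan with its own pose and stack the results."""
    return np.vstack([to_base_frame(p, T) for p, T in zip(scans, poses)])
```

Any error in a pose shows up directly as a misalignment of that scan, which is why pose accuracy dominates the final quality.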

But what happens when poses are slightly wrong, as they would be in a real deployment with encoder drift, joint flexing, or a shifted base? We simulated this by injecting controlled noise (up to 5 cm translation and 5° rotation) into the ground-truth transforms, then tried to recover the correct alignment using several registration methods: ICP (point-to-point and point-to-plane), KISS-ICP, and FilterReg.
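A bounded pose perturbation of this kind can be sketched as follows. The sampling scheme here (random direction and axis, magnitudes drawn uniformly up to the bound, perturbation applied in the base frame) is an assumption for illustration; the distribution used in the experiments is not specified above.

```python
import numpy as np

def axis_angle_to_matrix(axis, angle):
    """Rodrigues' formula: rotation by `angle` (radians) about `axis`."""
    axis = np.asarray(axis, dtype=float)
    axis = axis / np.linalg.norm(axis)
    K = np.array([[0.0, -axis[2], axis[1]],
                  [axis[2], 0.0, -axis[0]],
                  [-axis[1], axis[0], 0.0]])
    return np.eye(3) + np.sin(angle) * K + (1.0 - np.cos(angle)) * (K @ K)

def perturb_pose(T, max_trans=0.05, max_rot_deg=5.0, rng=None):
    """Return a copy of the 4x4 pose T with bounded random noise applied."""
    rng = np.random.default_rng() if rng is None else rng
    # Random unit direction scaled to a length in [0, max_trans].
    direction = rng.normal(size=3)
    direction /= np.linalg.norm(direction)
    delta_t = direction * rng.uniform(0.0, max_trans)
    # Random rotation axis with an angle in [0, max_rot_deg].
    angle = np.radians(rng.uniform(0.0, max_rot_deg))
    delta_T = np.eye(4)
    delta_T[:3, :3] = axis_angle_to_matrix(rng.normal(size=3), angle)
    delta_T[:3, 3] = delta_t
    # Left-multiplication applies the disturbance in the base frame.
    return delta_T @ T
```

Passing a seeded `rng` makes a noise sweep repeatable, so each registration method can be evaluated on identical perturbed poses.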

None of them worked reliably. On featureless walls, the registration often made things worse — converging to incorrect local minima because the surface provides no distinctive correspondences. The algorithms could correct depth (along the camera’s optical axis) to some extent, but lateral alignment and rotation were essentially unconstrained on a flat plane.
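The degeneracy can be checked numerically. For linearized point-to-plane alignment, each point `p` with normal `n` contributes a Jacobian row `[p × n, n]` over a small rotation and translation. On an ideal plane, the resulting 6x6 information matrix has rank 3: only the offset along the normal and the two tilts are constrained, while in-plane sliding and in-plane rotation are not. The sketch below uses a synthetic, noise-free wall and is an idealized illustration, not a reproduction of the experiments.

```python
import numpy as np

def point_to_plane_information(points, normals):
    """6x6 Gauss-Newton information matrix J^T J for point-to-plane alignment.

    Column order is [rot_x, rot_y, rot_z, trans_x, trans_y, trans_z].
    """
    J = np.hstack([np.cross(points, normals), normals])
    return J.T @ J

# Synthetic flat wall in the z = 0 plane with normals along +z.
rng = np.random.default_rng(0)
xy = rng.uniform(-1.0, 1.0, size=(500, 2))
wall = np.column_stack([xy, np.zeros(500)])
normals = np.tile([0.0, 0.0, 1.0], (500, 1))

H = point_to_plane_information(wall, normals)
eigvals = np.linalg.eigvalsh(H)
# Count directions the data actually constrains.
rank = int(np.sum(eigvals > 1e-9 * eigvals.max()))
print("constrained degrees of freedom:", rank)
```

With real sensor noise the unconstrained directions are no longer exactly free, but they are driven by noise rather than geometry, which is consistent with the optimizer drifting to wrong local minima.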

This leads to the project’s central takeaway: for featureless surfaces, getting the camera pose right in the first place matters far more than trying to fix it afterwards with registration. In practice, this means investing in better kinematic calibration, external tracking, or multi-modal sensing rather than relying on post-hoc point cloud alignment.

What I Learned

This was my first time working with a real robotic system end-to-end — from hardware setup and ROS integration to sensor characterization and algorithm evaluation. A few things stood out.

Systematic sensor characterization pays off. Before touching any algorithm, we spent weeks just understanding the camera’s behavior under different conditions. This felt slow at the time, but it meant every downstream decision was grounded in real data rather than assumptions. The intensity filtering trick alone transformed the quality of our scans.

Negative results are results. The fact that ICP and friends failed on featureless walls isn’t a failure of the project — it’s the main finding. It quantifies something practitioners often suspect but rarely validate rigorously: registration algorithms need features, and when there aren’t any, you need a different strategy entirely.

Working with real hardware changes your perspective. Simulation lets you ignore vibration settling time, motion blur during frame accumulation, cable management on a robot arm, and a dozen other things that matter enormously in practice. Dealing with these taught me more about systems thinking than any course.